Game-changing cloud computing trends to drive business

Cloud computing has become one of the fastest-growing areas in the IT market, and we want to help companies choose the right cloud computing technology for their business.

In this article, we discuss the major cloud computing trends of 2020, including the rise of hybrid clouds, the growth of AI in cloud services, and the increasing focus on security. We provide insights into how these trends are shaping the future of cloud technology.

The year 2020 has become "the year of the future" due to the active development of artificial intelligence, the Internet of Things (IoT), and cloud technologies. Over the past five years, cloud computing has become one of the fastest-growing areas in the IT market. Total spending on public cloud services is projected to grow by 33.9% over the next five years.

Clearly, businesses must adapt to and adopt these new technologies if they want to remain viable and profitable this year. While cloud computing services have taken hold in nearly every industry, it can be difficult to navigate all of the different cloud computing service delivery models. We at Evrone have conducted research on the future of cloud computing architecture. We would like to share the findings with you and help you determine which options are right for your business.

Growth in hybrid cloud popularity

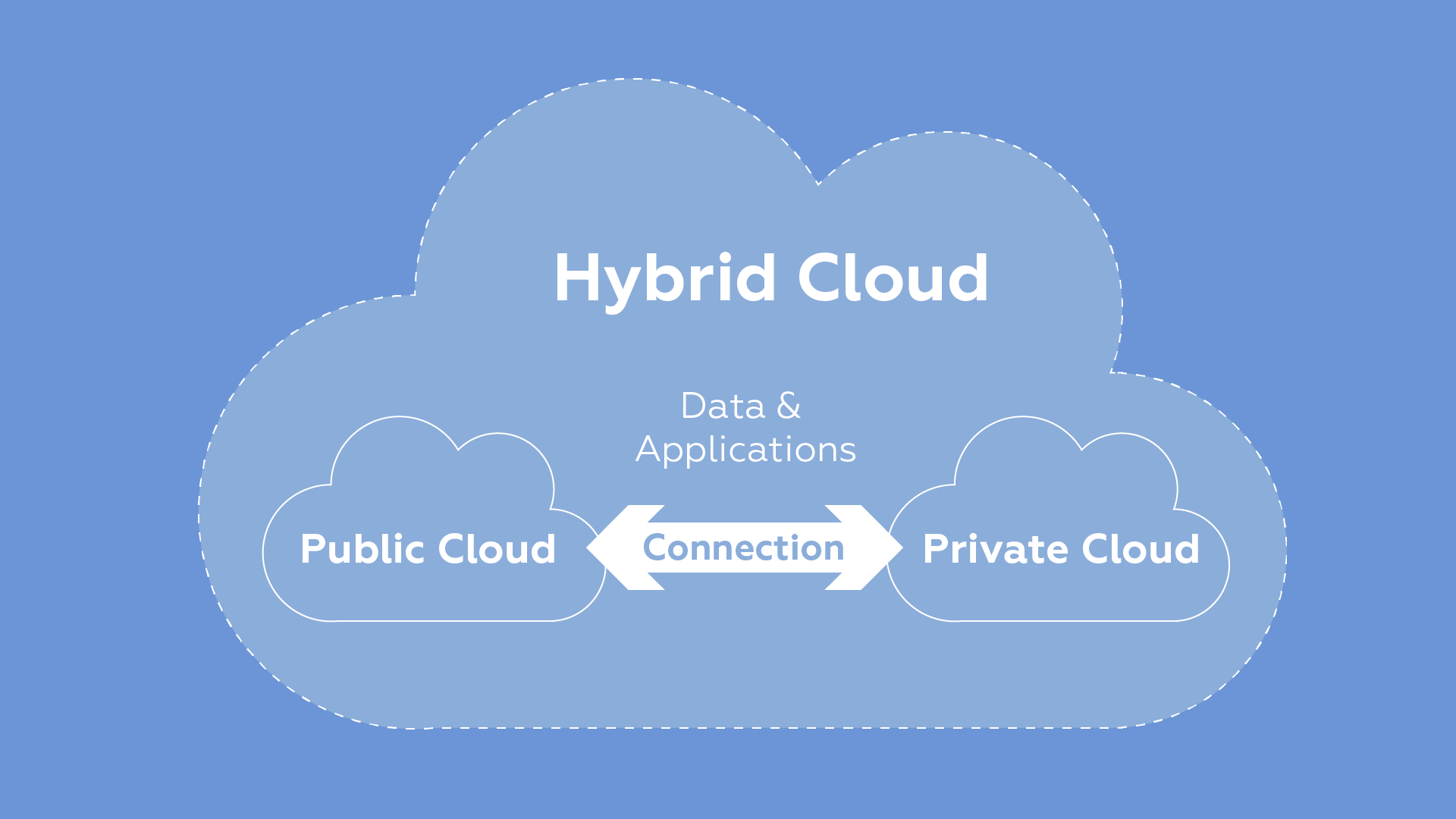

The hybrid cloud is a modern IT infrastructure that combines private and public cloud infrastructures. The two remain separate entities, but are tied together by technology that supports data and application portability. Using a hybrid cloud helps to minimize scaling expenses, while also ensuring the security of confidential enterprise data.

Research firm Gartner predicted that, in 2020, 75% of organizations would switch to a multi-cloud or hybrid cloud model of work, and that by 2023 the hybrid cloud market would be growing by 17% per year. It's no surprise, since the use of cloud technologies has been actively growing for several years. With modern technologies, there is no need to invest in more hardware or a system administrator.

While there are many different hybrid cloud examples, the illustration above shows that the two infrastructures are linked together to form a cohesive environment that facilitates the transfer of data and applications back and forth. This allows for increased mobility and flexibility, while maximizing data security, since the information never actually leaves the cloud infrastructure.

Artificial Intelligence comes to the forefront

In 2020, there has been a significant increase in the use of artificial intelligence. From energy savings, to pattern detection during server or network hardware failures, AI can be used to solve problems long before they occur. Thanks to AI, data centers can learn from previous trends and more efficiently distribute workloads during peak periods.

AI also helps organizations address skills shortages. Gartner predicts that deficiencies in infrastructure and work skills will cause substantial business disruptions in 75% of organizations. AI will play a significant role in automating many of the tasks that require hours of manpower today. AI-based services and solutions will be delivered using cloud technology.

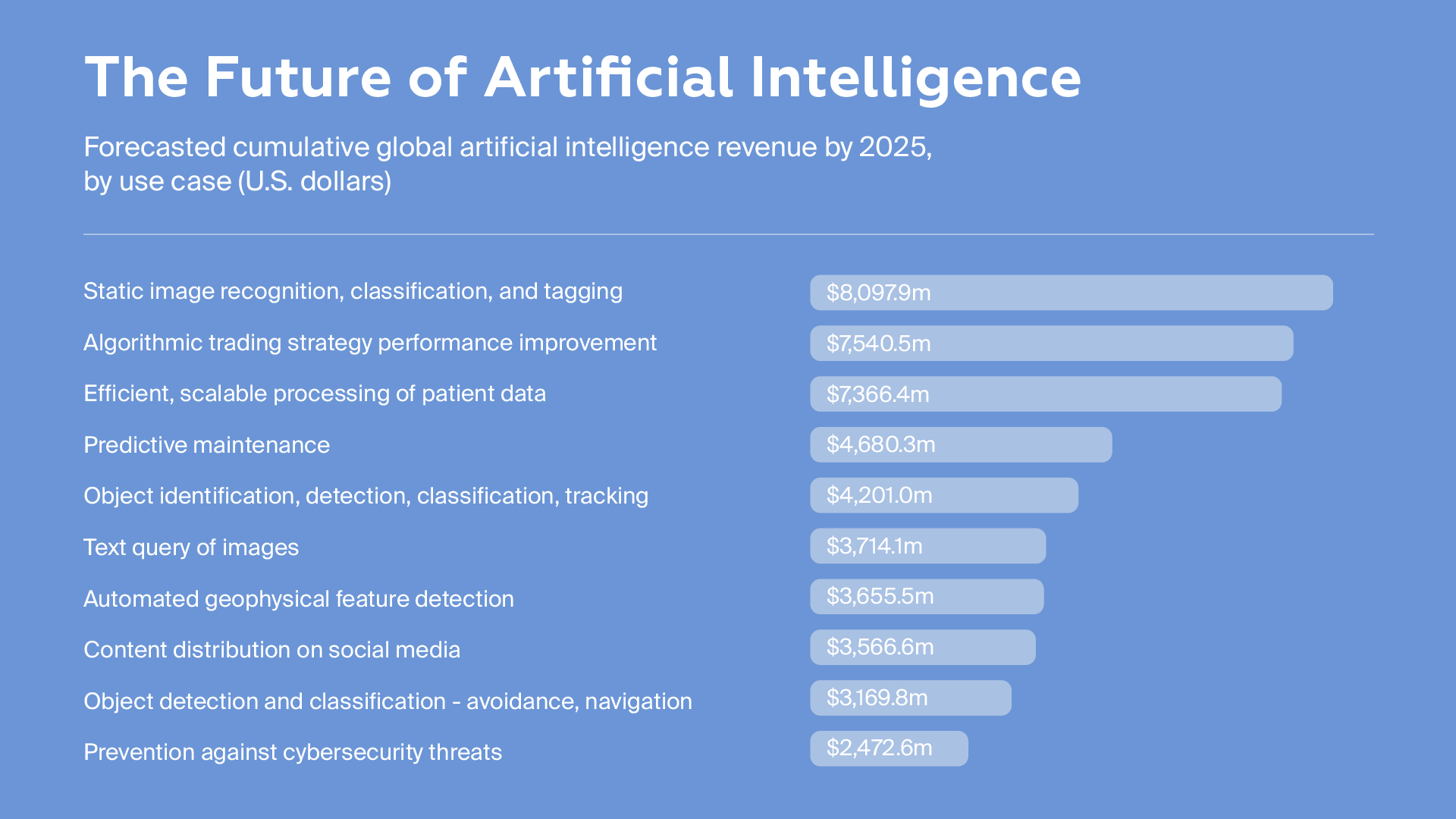

Projections for 2025 indicate that the top ten use cases for AI will generate over $48 billion globally. AI will affect nearly every industry, from social media to healthcare to cybersecurity. Revenue from AI image classification and recognition alone is expected to top $8 billion.

Containers are a must

"Write once - run anywhere." Organizations are quickly getting on board with this principle of containers.

Gartner predicts that by 2023, more than 70% of companies will use more than two container applications in their work, which is 50% more than in 2019. According to IDC, 95% of new microservices will be deployed in containers by 2021. Containers simplify the deployment, management, and operational challenges that hybrid clouds often generate. As containers and cloud computing go hand in hand, both of these technologies will show noticeable growth over the next few years.

In 2020, the principle of container development has become the de facto standard for creating both large and small projects. Almost all new projects in our company have a DevOps specialist and use containerization based on Kubernetes.

Containerization is highly beneficial for IT companies. By using containers, developers can increase app productivity and scalability and speed up deployment. Portability is increased, allowing for high app performance in any environment. Containers also ensure business continuity, since the containers all run independently, and they can help businesses maximize their security through automatic application management and installation.

The rise of Edge computing

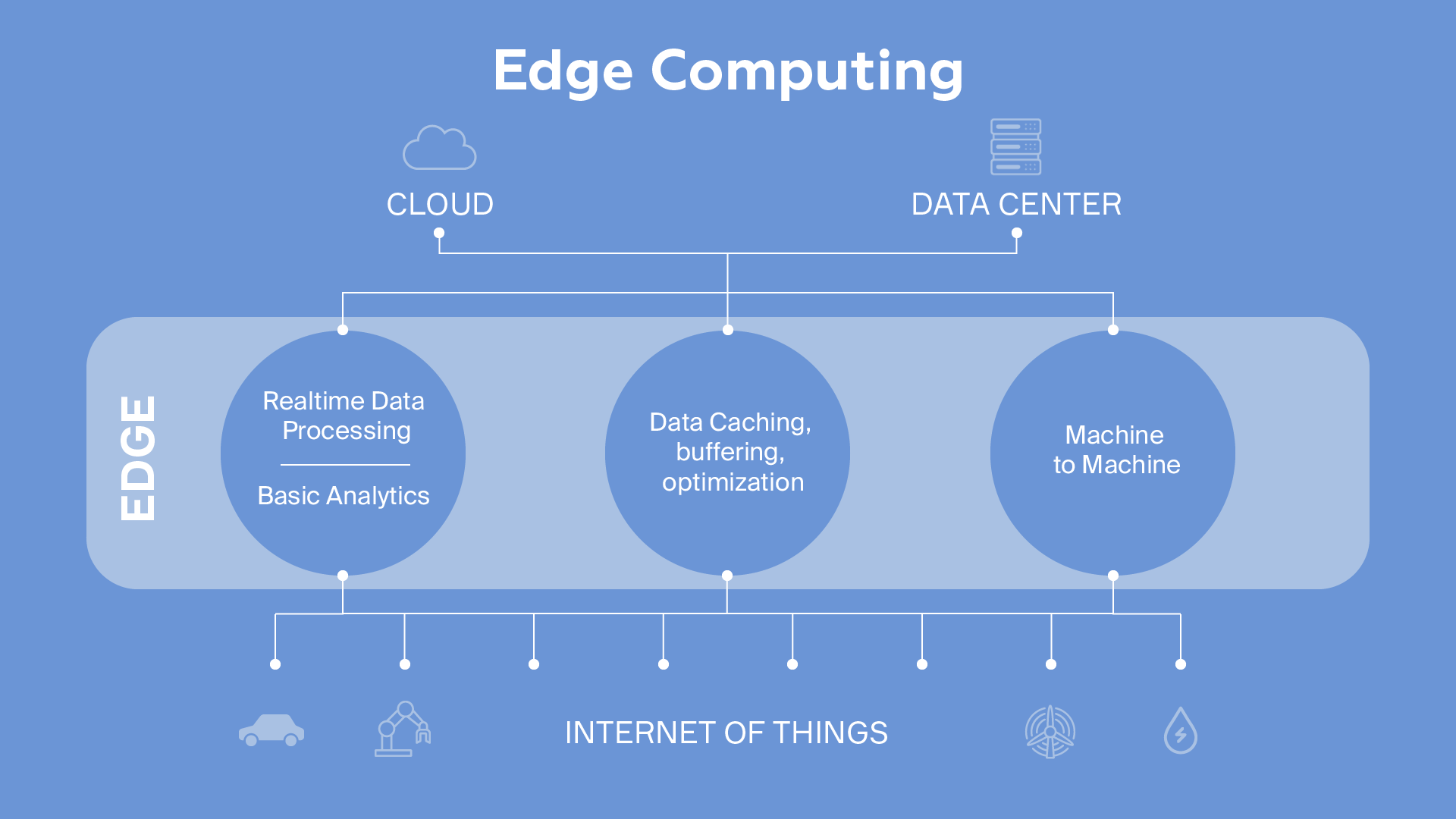

In general, this technology refers to remote monitoring and data processing performed directly on IoT devices. Edge computing may seem like a novelty, but in fact its operating principle is already illustrated by smartphones, tablets, sensors, robotics, production workshops, and other massively distributed devices and technologies that perform analytics and computing "on the edge."

A survey conducted by Cisco predicts that, by 2022, the number of devices connected to IP networks will be three times the population of the planet. A Gartner study projects that the total number of IoT gadgets will reach 25 billion by 2021, together producing a massive amount of data.

According to McKinsey research, 127 new IoT devices connect to the Internet every second. Companies have a need for small data centers that are located as close as possible to data generation sites. Gartner claims that, by 2025, 75% of the data generated by companies will come from and be stored on the Edge (in the place where the data flows are generated). An IDC FutureScape report says that, in 2022, 40% of enterprises will double their spending on IT development at Edge locations.

The rise of Edge computing is a predictable phenomenon. In large cities, tens of thousands of cameras are installed on the streets that are capable of recognizing license plate numbers, and eventually, people's faces. Why transfer terabytes of "raw" data somewhere else, when a small computing module in the camera itself can process the video and extract the necessary elements from the stream? Only the cleaned data, identifying people, numbers, and images, needs to be sent to the data center.
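The camera scenario above can be illustrated with a minimal sketch. It assumes a recognition step has already run on the device and produced a list of detections. The `Detection` record, the `filter_at_edge` function, the label names, and the 0.8 confidence threshold are all hypothetical, chosen only for illustration and not taken from any particular product.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical record produced by an on-camera recognition step."""
    frame_id: int
    label: str         # e.g. "license_plate" or "face" (illustrative labels)
    confidence: float  # score between 0.0 and 1.0


def filter_at_edge(detections, wanted_labels, min_confidence=0.8):
    """Keep only the detections worth sending to the data center.

    Everything else stays on the device, so the raw stream never has
    to leave the camera.
    """
    return [
        d for d in detections
        if d.label in wanted_labels and d.confidence >= min_confidence
    ]


# Illustrative input: four detections from three frames.
raw = [
    Detection(1, "license_plate", 0.95),
    Detection(1, "tree", 0.99),           # irrelevant label, dropped
    Detection(2, "license_plate", 0.40),  # too uncertain, dropped
    Detection(3, "face", 0.88),
]

to_send = filter_at_edge(raw, {"license_plate", "face"})
print(len(raw), "detections reduced to", len(to_send))
```

The point of the sketch is the shape of the data flow, not the numbers: the device decides what is relevant, and only that small subset travels over the network.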

Intermediate processing is already being actively implemented. On fishing ships, there is no stable connection with servers on land, so a server rack is installed on the vessel to analyze the photos and videos and assess the quality of fish. From cars to smart homes to manufacturing, the power and reach of Edge computing are enormous. There are already technologies on the market for remote code delivery to servers, including Amazon's Greengrass.

Increasing demand for DRaaS services

DRaaS stands for disaster recovery as a service. It uses cloud and on-premise resources to back up important information and applications and provide system fault tolerance. Thanks to DRaaS, even after a technical failure, business services can continue to operate as usual. It also provides a backup working environment until the normal environment returns to its original state, according to the disaster recovery plan (DRP).

As more organizations move toward digital technology, downtime expenses are skyrocketing. An IBM report says that the average data breach costs a company approximately $3.92 million. According to Gartner, downtime costs for IT systems are about $5,600 per minute. Of course, the cost depends on the type of business the organization is engaged in and the type of ERP and CRM systems it uses. For an e-commerce firm, costs can be monumental, because downtime means lost sales opportunities. In addition, regulations like GDPR impose strict security standards for dealing with customer data. Organizations must comply with legal requirements and ensure that they adopt effective disaster recovery strategies. This encourages organizations to increasingly focus on DR, since disaster recovery automation can significantly reduce the time it takes to recover systems. According to IDC, the DRaaS market may reach $4.5 billion in 2020 and increase by 15.4% by 2023.
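To put the per-minute downtime figure cited above in perspective, here is a rough back-of-the-envelope calculation. It assumes the cost scales linearly with the length of the outage, which is a simplification. The function name `downtime_cost` is hypothetical, and as noted above, the real figure varies widely by business.

```python
def downtime_cost(minutes, cost_per_minute=5600):
    """Estimate outage cost, assuming a flat per-minute rate.

    The default rate is the roughly $5,600 per minute cited in the text.
    A flat rate is an assumption made for illustration only.
    """
    return minutes * cost_per_minute


# A one-hour outage at the cited rate.
one_hour = downtime_cost(60)
print(f"${one_hour:,}")  # prints "$336,000"
```

Under that assumption, cutting recovery time from one hour to ten minutes would reduce the estimated cost from $336,000 to $56,000, which is why recovery automation gets so much attention.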

Unfortunately, when working with data, as the amount of information increases, the consequences of data loss increase proportionally. Many smaller and mid-sized organizations are completely unprepared for a disaster resulting in lost data, and studies show that such catastrophes often force businesses to close their doors permanently, as they are unable to recover. Companies must have a comprehensive disaster recovery plan in place if they want to survive a data loss.

The development of HCI solutions

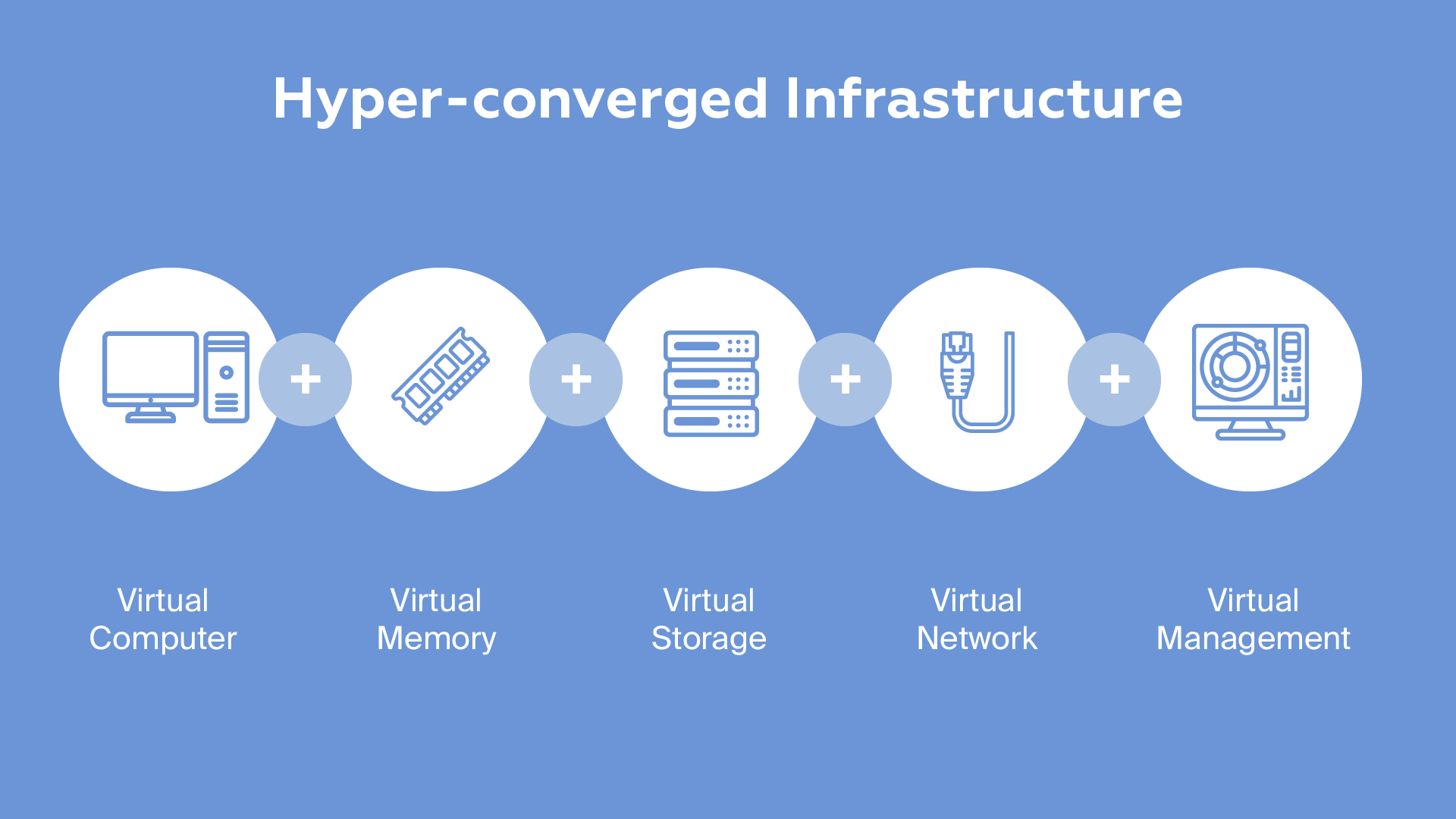

Hyper-converged infrastructure (HCI) is an infrastructure that virtualizes all elements of traditional "hardware-defined" systems. HCI integrated solutions include virtualized computing, a virtual storage network, and virtualized networks.

HCI has gained in popularity because it allows for centralized, easy monitoring, while increasing scalability. Gartner predicted that in 2020, 20% of business-critical applications will transition from their current three-tier IT infrastructure to a hyper-converged infrastructure. Mordor Intelligence, a research firm, predicts that the HCI market will grow by an average of 13% per year from 2019 to 2024. HCI solutions are increasingly becoming the optimal alternative to open-source cloud platforms, as they are easier to manage and more affordable than traditional data center systems.
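An average annual growth rate is easier to judge as a cumulative figure. The sketch below assumes the 13% is a compound annual rate applied over the five years from 2019 to 2024. That reading is an assumption, and `growth_multiple` is a hypothetical helper name.

```python
def growth_multiple(annual_rate, years):
    """Return the overall size multiple after compounding annual growth."""
    return (1 + annual_rate) ** years


# 13% per year, compounded over the five years from 2019 to 2024.
multiple = growth_multiple(0.13, 5)
print(f"{multiple:.2f}x")  # prints "1.84x"
```

Under that assumption, the market in 2024 would be roughly 1.84 times its 2019 size.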

HCI solutions enable developers to deploy solutions faster by linking the entire data center into a cohesive virtual infrastructure. Compute, memory, storage, networks, and their management are all virtualized, maximizing flexibility, scalability, and performance throughout the data center.

Future-proofing your enterprise

As a cloud computing service provider, Evrone is happy to partner with you to help you better understand the different types of cloud computing technologies and implement them in your company. If you want your business to develop successfully in 2021, contact us and learn how cloud computing services for businesses can benefit your enterprise.

Inevitably, AI will move from being a popular and expensive toy to being a useful tool for everyday life. Machine learning, a subset of AI, can lead to machines that have the ability to learn on their own. At Evrone, we are already using AI, ML, and cloud technologies, even on relatively small projects.